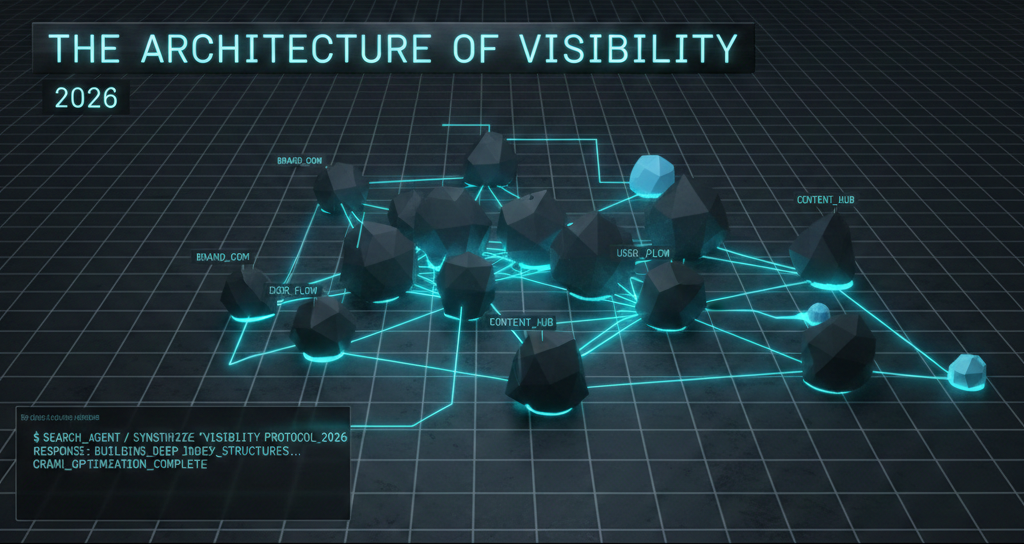

The Architecture of Visibility in the Age of Synthetic Search and Algorithmic Discovery: A Comprehensive Analysis of Generative Engine Optimization for 2026

The transition from a link-based search ecosystem to a synthesis-based information environment has fundamentally reconfigured the mechanics of digital findability. By February 2026, the traditional search engine results page (SERP) has undergone a significant overhaul, moving from a list of disparate sources to a unified, AI-generated overview that satisfies user intent without requiring a single click to external domains. This report examines the structural shifts in audience discovery, the technical requirements for machine-readability, and the strategic imperative for brands to move from ranking-centric models to recommendation-centric frameworks. The analysis identifies the specific factors that allow a brand to become a “default answer” in the synthetic search era, drawing on the most recent industry data and algorithmic benchmarks.

The Synthetic Search Landscape: Trends and Behavioral Shifts

The landscape in early 2026 is defined by the rapid adoption of generative search tools. Research indicates that approximately two-thirds of consumers now believe artificial intelligence will replace traditional search within five years, a sentiment already reflected in the $357\%$ year-over-year increase in referral visits from AI platforms such as ChatGPT, Gemini, and Perplexity. This shift is not merely a change in interface but a transformation of the customer journey itself.

The Rise of the Zero-Click Funnel

One of the most disruptive developments in the current search environment is the prevalence of zero-click searches. Data suggests that nearly $60\%$ of searches now conclude without a user clicking through to any website. This is driven by AI Overviews (AIO), which now appear in $99.9\%$ of informational queries and satisfy user intent directly on the results page. For brands, this necessitates a move toward influence-based metrics, where visibility is measured by mention frequency and citation authority rather than raw traffic volume.

| Metric | Traditional SEO (2020) | GEO/AEO Environment (2026) |
| --- | --- | --- |
| Primary Goal | Position 1-3 Rankings | Inclusion in AI Summaries/Citations |
| Discovery Trigger | Keyword Match | Entity Relevance & Trust |
| User Behavior | Click-Through to Site | Information Synthesis in UI |
| Conversion Driver | Landing Page Design | AI Endorsement & Contextual Sentiment |
| Traffic Model | High Volume, Lower Intent | Lower Volume, High Intent (Late Funnel) |

The reduction in organic click-through rates is stark. Reports from mid-February 2026 show that organic search traffic has fallen by around $2.5\%$ year-on-year across $40,000$ monitored sites, with AI Overviews reducing click-through rates by up to $35\%$ when present. For competitive sectors like travel and financial services, informational queries that previously drove significant top-of-funnel traffic are now being fully synthesized by search agents.

The LLM Consistency and Recommendation Share (LCRS)

A new performance indicator has emerged to replace traditional ranking positions: the LLM Consistency and Recommendation Share (LCRS). This metric measures the percentage of responses in which a brand is mentioned or recommended across repeated runs of a set of prompts. Because LLM output is non-deterministic and identical queries may produce different answers, marketers now rely on aggregate sampling to determine brand visibility.

Research shows that brand recommendations are highly inconsistent; there is a less than $1\%$ chance that ChatGPT or Google’s AI will provide the exact same list of brands if asked $100$ times. Therefore, the goal for 2026 is not “Position 1” but rather high “Share of Voice” across multiple iterations of intent-based queries.
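As a minimal sketch of the aggregate sampling described above, the snippet below estimates a recommendation share from a handful of stored answers. The function name `recommendation_share`, the brand names, and the sample responses are all hypothetical, and the substring matching is a simplifying assumption rather than how any particular tracking tool works.

```python
from collections import Counter


def recommendation_share(responses, brands):
    """Return, per brand, the fraction of sampled responses that mention it."""
    if not responses:
        raise ValueError("at least one sampled response is required")
    counts = Counter()
    for text in responses:
        lowered = text.lower()
        for brand in brands:
            # Naive case-insensitive substring match (an assumption); real
            # tracking would need alias and entity resolution.
            if brand.lower() in lowered:
                counts[brand] += 1
    return {brand: counts[brand] / len(responses) for brand in brands}


# Hypothetical answers collected by re-running one prompt several times.
sampled = [
    "For small teams, Acme CRM and Bolt are the usual picks.",
    "Bolt is a solid choice; Cirrus is worth a look too.",
    "Most reviewers recommend Acme CRM.",
    "Consider Bolt or Acme CRM depending on budget.",
]

shares = recommendation_share(sampled, ["Acme CRM", "Bolt", "Cirrus"])
for brand, share in shares.items():
    print(f"{brand}: {share:.0%}")
```

In practice the sample would span many prompts and many runs per prompt, since a handful of responses says little about a non-deterministic system.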

Core Factors for AI Search Visibility: The Search Engine Land Framework

Capturing visibility in the synthetic era requires adherence to specific technical and content pillars. The recent analysis by Search Engine Land identifies five key factors that dictate whether a brand is cited by a generative engine. These factors align with the broader shift from “indexing” to “retrieval-augmented generation” (RAG).

1. Contextual Alignment and Entity Strength

AI models do not simply look for keywords; they map relationships between entities—people, brands, products, and concepts. Visibility is earned when a brand’s digital footprint is semantically dense and logically connected. This involves moving beyond surface-level content to create “Entity Moats,” where a brand is so strongly associated with a specific topic that AI models are forced to cite it as the primary authority.

Data from early 2026 suggests that $80\%$ of B2B technology buyers now trust generative AI as much as traditional search for supplier research, making “recommendability” the primary competitive advantage. To achieve this, brands must ensure their positioning is clear and unambiguous; vague messaging often results in the brand being “boxed” incorrectly by LLMs, leading to exclusion from relevant shortlists.

2. The Technical Infrastructure of Machine-Readability

For a brand to be visible, it must first be “retrievable.” This requires a technical foundation that caters specifically to AI crawlers like GPTBot, PerplexityBot, and ClaudeBot.

| Crawler Name | Originating Platform | Primary Function |
| --- | --- | --- |
| GPTBot | OpenAI (ChatGPT) | Deep web crawling for model training and search |
| PerplexityBot | Perplexity | Real-time retrieval for citation-based answers |
| ClaudeBot | Anthropic | Content analysis for contextual synthesis |
| Googlebot | Google (AIO) | Indexing that feeds AI Overviews |
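One quick retrievability check is whether a site's robots.txt blocks these crawlers. The sketch below uses Python's standard `urllib.robotparser`; the sample robots.txt content, the `crawler_access` helper, and the example URL are illustrative assumptions.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens named in the text above.
AI_CRAWLERS = ["GPTBot", "PerplexityBot", "ClaudeBot"]


def crawler_access(robots_txt, url, agents=AI_CRAWLERS):
    """Map each crawler token to whether robots.txt lets it fetch the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


# Hypothetical robots.txt that blocks GPTBot entirely and only hides
# /admin/ from every other crawler.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""

access = crawler_access(SAMPLE_ROBOTS, "https://example.com/pricing")
for agent, allowed in access.items():
    print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

A result of "allowed" only means robots.txt does not forbid the fetch. It says nothing about whether the crawler can parse the rendered page.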

A critical emerging standard is the implementation of llms.txt and llms-full.txt files. These files, placed in the root directory, act as a curated sitemap in Markdown format, guiding AI bots to the most important, fact-dense pages while avoiding the “noise” of traditional navigation menus. Furthermore, ensuring server-side rendering is non-negotiable; while traditional search engines have improved their JavaScript execution, many AI crawlers still struggle to parse content that is not immediately visible in the source code.
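To make the idea of a curated Markdown sitemap concrete, here is a small sketch that assembles an llms.txt file. The layout (a title heading, a blockquote summary, then sections of annotated links) follows the commonly circulated llms.txt proposal as understood here, so treat the exact conventions as an assumption. The site name, URLs, and the `build_llms_txt` helper are hypothetical.

```python
def build_llms_txt(site_name, summary, sections):
    """Assemble llms.txt content.

    sections maps a section heading to a list of (title, url, note) tuples.
    """
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, pages in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in pages:
            lines.append(f"- [{title}]({url}): {note}")
        lines.append("")
    # Normalize to exactly one trailing newline.
    return "\n".join(lines).rstrip() + "\n"


llms_txt = build_llms_txt(
    "Example Co",
    "Example Co sells scheduling software for dental clinics.",
    {
        "Docs": [
            ("Pricing", "https://example.com/pricing.md", "Plans and limits"),
            ("API guide", "https://example.com/api.md", "Authentication and endpoints"),
        ],
    },
)
print(llms_txt)
```

The output would be saved as `/llms.txt` at the site root. Which pages to list is an editorial decision, with fact-dense pages taking priority over navigation.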

3. Factual Authority and the “Rule of 250”

AI models prioritize content that is specific, accurate, and grounded in human expertise. One of the most significant professional benchmarks in 2026 is the “Rule of 250”: it takes approximately $250$ substantial documents or publications across high-authority sites to meaningfully influence how an LLM perceives a brand. Brands that do not systematically document their expertise across every customer segment and use case risk having their narrative defined by competitors or generic AI hallucinations.

Expert-led content, backed by original research and proprietary data, serves as a primary trust signal. AI systems favor content that demonstrates firsthand experience—often identifying when content is written by a subject matter expert versus a generalist summarizing other sources.

4. Conversational Formatting and Retrieval Optimization

The way information is presented determines its “citation-friendliness.” Research indicates that $44.2\%$ of all LLM citations come from the first $30\%$ of a text. This has led to the adoption of the “Answer-First” methodology:

- Direct Responses: Leading with a clear, concise answer within the first $50$ words of a section.
- Structured Headings: Using headers that mirror real user questions to help models map content to user intent.
- Extraction-Ready Sections: Creating self-contained blocks of information that AI can extract and use independently of the rest of the page.
- Data Tables: Presenting hard facts, specifications, and pricing in Markdown tables, which increases the likelihood of being cited in comparison queries by up to $40\%$.
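The first two points in the list above lend themselves to a simple automated check. The sketch below is a crude proxy: it flags whether a heading is phrased as a question and whether the opening paragraph fits within the 50-word budget. The `audit_section` helper and the sample text are hypothetical, and counting words says nothing about whether the opening actually answers the question.

```python
def audit_section(heading, body, max_words=50):
    """Crude answer-first check for one content section."""
    paragraphs = [p for p in body.strip().split("\n\n") if p.strip()]
    opening = paragraphs[0] if paragraphs else ""
    word_count = len(opening.split())
    return {
        # Proxy for "header mirrors a real user question".
        "heading_is_question": heading.strip().endswith("?"),
        "opening_word_count": word_count,
        # Proxy for "concise answer within the first 50 words".
        "answer_first": 0 < word_count <= max_words,
    }


section_heading = "What is generative engine optimization?"
section_body = (
    "Generative engine optimization is the practice of structuring content "
    "so AI systems can retrieve, summarize, and cite it.\n\n"
    "The longer background, history, and caveats follow in later paragraphs."
)

report = audit_section(section_heading, section_body)
print(report)
```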

5. Social Verification and Off-Site Reputation

AI search visibility is not just a reflection of what is on a brand’s website; it is an aggregation of the brand’s entire digital footprint. Mentions in reviews, forums, and social media posts contribute to the “Confidence Score” an AI model assigns to a brand.

LinkedIn has emerged as a critical authority signal. Posts on LinkedIn can appear in AI search responses within minutes or hours, whereas website content may take weeks to influence LLM outputs. This immediate amplification makes a consistent social media presence essential for maintaining real-time relevance in AI discovery.

The Role of Structured Data and Schema in 2026

In the synthetic search era, schema markup is the primary language search engines and AI models use to interpret page intent and credibility. It is the “entry ticket” to the generative index.

Essential Schema Types for AI Visibility

Research from early 2024 showed that $92\%$ of URLs featured in AI answer summaries had valid structured data. By 2026, this has become a baseline requirement for any brand seeking to maintain market share.

| Schema Type | AI Search Benefit | Implementation Focus |
| --- | --- | --- |
| Organization | Establishes entity identity | Use sameAs to link social profiles and Wikipedia |
| FAQPage | Increases “citable surface area” | Mark up question-answer pairs for direct retrieval |
| Person (as author) | Proves human expertise (E-E-A-T) | Link to professional bios and certifications |
| Product | Feeds AI shopping assistants | Include specifications, price, and availability |
| HowTo | Surfaced in instructional queries | Structure step-by-step guides for AI synthesis |

A 2026 benchmark by Schema App indicates that pages with proper schema implementation see a jump from $0\%$ to $40\%$ visibility in AI Overviews within weeks. The strategic implementation of FAQPage schema is particularly effective for capturing “Answer Engine Optimization” (AEO) traffic, as it allows AI platforms to verify and cite sources with high precision.
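For illustration, the sketch below generates JSON-LD for two of the types discussed here, Organization with `sameAs` links and FAQPage with question-answer pairs, using schema.org vocabulary. The helper names, company details, and URLs are hypothetical placeholders.

```python
import json


def organization_jsonld(name, url, same_as):
    """Organization markup with sameAs links to the brand's other profiles."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": list(same_as),
    }


def faq_jsonld(pairs):
    """FAQPage markup built from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def script_tag(data):
    """Wrap JSON-LD in the script element used to embed it in a page."""
    # Escape "</" so answer text cannot close the script element early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


org = organization_jsonld(
    "Example Co",
    "https://example.com",
    ["https://www.linkedin.com/company/example-co"],
)
faq = faq_jsonld([("What does Example Co sell?", "Scheduling software for clinics.")])
print(script_tag(org))
print(script_tag(faq))
```

Generated markup should still be checked with a structured data validator before deployment, since valid JSON is not necessarily valid schema.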

Mechanizing Authority: The Shift to Automated SEO Software

The technical complexity of maintaining visibility across thousands of pages has rendered manual SEO unsustainable for modern agencies. The rise of machine-led systems marks a shift in agency operations, moving from writing tasks to managing automated workflows.

Scalability Through Machine-Led Optimization

Systems like NytroSEO have redefined the speed at which technical SEO can be deployed. These tools utilize an adaptive AI engine that understands website content and correlates it with metadata keywords and conversational search intent.

The primary advantage of such automated SEO software is its ability to handle “autopilot” optimization for titles, descriptions, image alt texts, and link anchor texts across an entire site. For SEO agency software to be effective in 2026, it must support dynamic deployment: using JavaScript snippets to execute changes in real time without requiring manual edits to the site’s CMS.

Comparative Analysis of Automation Tools

As digital marketing firms evaluate their tech stacks for 2026, the focus has moved toward tools that bridge the gap between traditional indexing and generative retrieval.

| Tool | Core Strength | AI Focus |
| --- | --- | --- |
| NytroSEO | Automated Meta Tag & Schema | Optimized for conversational “AEO” queries |
| Alli AI | Technical Task Automation | Automated technical audits and site fixes |
| Semrush AI Visibility | Competitive Benchmarking | Share of Voice and Sentiment tracking in LLMs |
| Otterly AI | GEO Audit & Monitoring | Tracking citations across multiple LLM UIs |
| Surfer SEO | Content Topic Clustering | NLP-based topical authority building |

Agencies that adopt SEO automation tools report significant time savings, allowing them to focus on the high-level strategic tasks that machines cannot yet replicate: relationship building, creative storytelling, and ethical narrative control.

Measuring Success in the Synthetic Funnel

The metrics for visibility in 2026 have moved away from vanity numbers like raw impressions and toward “Brand Archetype Classification” and “Sentiment Polarity”.

New Key Performance Indicators (KPIs)

To accurately track performance, CMOs and digital marketers must monitor a new suite of metrics that reflect the AI-mediated nature of discovery.

- Share of Model: The frequency with which a brand is mentioned in AI-generated answers compared to its competitors.
- Citation Authority: The consistency with which an AI model links to a brand’s website as the primary source for a factual claim.
- Prompt Impact: The effectiveness of a brand’s content in providing direct answers to specific natural language questions.
- Sentiment Polarity Analysis: Tracking the tone (positive, neutral, or negative) that AI engines associate with a brand across different query contexts.
- AI Share of Voice (AI SOV): A comparative metric that shows which brand dominates the initial “shortlist” generated by AI search agents.
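Two of the metrics above reduce to simple arithmetic once mentions have been extracted and labeled. The sketch below assumes each mention has already been tagged with a brand and a tone; the `share_of_voice` and `sentiment_polarity` helpers, the net-polarity formula, and the sample data are illustrative assumptions, not an industry-standard definition.

```python
from collections import Counter


def share_of_voice(mentions):
    """Each brand's fraction of all brand mentions across sampled answers."""
    totals = Counter(brand for brand, _tone in mentions)
    all_mentions = sum(totals.values())
    if all_mentions == 0:
        return {}
    return {brand: count / all_mentions for brand, count in totals.items()}


def sentiment_polarity(mentions, brand):
    """Net tone in [-1, 1]: (positive - negative) / total mentions of brand."""
    tones = Counter(tone for name, tone in mentions if name == brand)
    total = sum(tones.values())
    if total == 0:
        return 0.0
    return (tones["positive"] - tones["negative"]) / total


# Hypothetical (brand, tone) pairs extracted from sampled AI answers.
mentions = [
    ("Acme", "positive"), ("Acme", "neutral"), ("Bolt", "positive"),
    ("Acme", "negative"), ("Bolt", "positive"), ("Acme", "positive"),
    ("Cirrus", "neutral"), ("Bolt", "negative"),
]

sov = share_of_voice(mentions)
acme_polarity = sentiment_polarity(mentions, "Acme")
print(sov)
print(acme_polarity)
```

Reading the two numbers together matters: a brand can lead on share of voice while carrying a weak or negative polarity.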

Data suggests that visitors arriving from AI recommendations convert at a rate $4.4\times$ higher than those from traditional search. This is because the AI assistant has already performed the initial vetting and research phase, delivering a highly qualified lead that is ready to act.

Tools for Multi-Platform Visibility Tracking

The fragmentation of the search market—where ChatGPT, Gemini, Perplexity, and Claude all favor different source types—requires specialized monitoring tools.

| Tool | Focus | Monitoring Depth |
| --- | --- | --- |
| SE Visible | Multi-engine visibility | Real AI answers tracking; sentiment analysis |
| HubSpot AEO Grader | Multi-platform analysis | Brand archetype and sentiment scoring |
| Ziptie.dev | Prompt-level monitoring | Tracks thousands of LLM interactions monthly |
| Ahrefs Brand Radar | Global SOV | Searches billions of prompt indexes automatically |
| Mention Network | Topic-driven mentions | Identifies which topics trigger brand recommendations |

The Human Element: Why E-E-A-T Still Wins

While the technical side of visibility focuses on machines, the content itself must remain focused on people. Search engines and AI models are increasingly sophisticated at distinguishing between generic, “noise-like” AI content and content grounded in real-world experience.

Brand Voice as a Ranking Signal

In 2026, “generic” is a death sentence for visibility. If content sounds like everything else on the web, AI models treat it as background noise during the synthesis phase. Earning a space in an AI summary requires having a unique perspective or “Value-Added Friction”—information that only a human with specific experience could provide.

The Credibility of the Founder-Led Profile

A significant trend in LinkedIn marketing for 2026 is the rise of the “Founder-Led” profile. Brands that open up “behind the scenes” and share authentic human stories earn higher trust scores from both users and algorithms. These human signals act as a powerful counterweight to the flood of low-effort AI content, ensuring that the brand remains a credible entity that search agents feel safe recommending.

Navigating the Future of Agentic Discovery

The redefinition of search in 2026 is not an end to visibility but a move toward a more structured and authoritative digital landscape. For brands and agencies, the path forward is clear: technical foundations must be systematized through automation, while content must be deepened through human expertise. By focusing on the five factors of AI visibility—context, infrastructure, authority, formatting, and social proof—organizations can ensure they remain the primary sources of truth in a world increasingly governed by synthetic answers. The winner in this new era is not the brand with the most content, but the brand with the most trusted, citable knowledge.

AI search visibility FAQ

What is AI search visibility?

AI search visibility refers to how often and how accurately a brand appears within AI-generated responses across synthetic search platforms. Unlike traditional search visibility, it measures inclusion, citation, and recommendation inside conversational AI results, and it directly shapes overall brand visibility.

What is an AI search visibility strategy?

An AI search visibility strategy is a structured approach to optimizing content, entities, and authority signals so that AI systems can interpret and surface a brand accurately. A strong strategy also strengthens traditional search visibility across AI-driven discovery environments.

How does AI visibility differ from traditional search visibility?

AI visibility concerns how AI systems reference and recommend content in synthesized answers, while search visibility traditionally measures rankings and clicks on search engine results pages. Improving AI visibility requires structured content, semantic clarity, and consistent branding so that the brand is included in AI-generated outputs.

Why is brand visibility important for AI search visibility?

Brand visibility matters because AI systems rely on recognized entities and trusted sources when generating answers. A strong brand presence across authoritative channels increases the likelihood that the brand appears in AI-generated results.

How do branding and visibility efforts improve AI visibility?

Branding and visibility initiatives such as consistent messaging, authoritative content, and digital entity optimization directly improve AI visibility. When they are aligned with an effective AI search visibility strategy, businesses improve both search visibility and long-term brand visibility in AI-powered search ecosystems.