A single overlooked line in your robots file can quietly sabotage your entire website’s visibility. Even a minor error can make your most important pages (key landing pages, product features, blogs, or lead magnets) vanish from search results. These pages won’t show up in Google, Bing, or newer AI-driven search engines. Instead of slow, steady growth, you might suddenly see traffic drop off without any obvious reason. Most site owners, marketing teams, and agencies never notice this technical issue until real business is already lost: missed sales, fewer new leads, and an invisible brand. The threat is subtle, but the results can be devastating.

Even technical teams can miss a robots file error, since it rarely triggers warnings or alerts. Your analytics dashboard may show puzzling drops in visits, but the underlying cause can hide for weeks or months. The fallout isn’t just about outdated rankings. Every day your pages aren’t indexed by search engines or picked up by AI apps, you’re losing new customers.

Don’t leave your site vulnerable to a hidden robots file mistake. Find out instantly if your pages are visible and fix issues before traffic disappears. Explore the system for free.

Your Robots File: The Unseen Gatekeeper to Your Business Online

Most digital teams underestimate just how vital a robots file is to their overall success. Think of it as a silent gatekeeper. When it’s set up correctly, it quietly allows search engines and AI tools to browse your content, discover your offers, and bring in new business. Yet, when a simple error goes unnoticed, it works against you, locking out the very sources of organic traffic you depend on for growth.

The challenge? Robots file issues are rarely obvious. Unlike a server outage or a broken checkout, they raise no glaring red alert. Instead, the effects add up quietly over time, draining your momentum. Pages get left out of Google’s index, new launches fall flat, and your best ideas never make it to potential buyers or clients using AI-driven discovery tools. In today’s fast-moving digital environment, ignoring the robots file is a gamble no business can afford.

How a Robots File Error Silently Blocks Business Growth

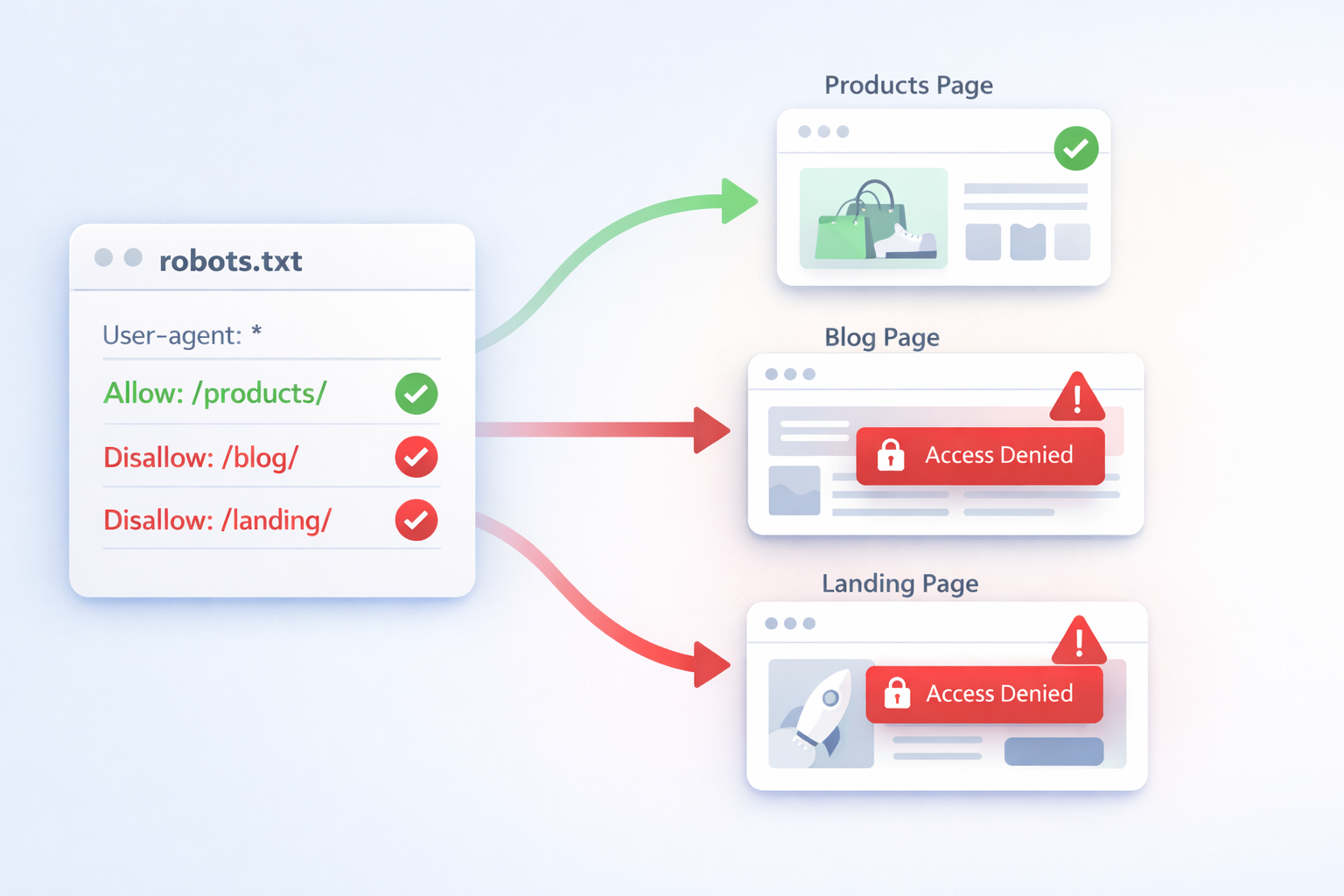

Problems arise when even a well-meaning update to the robots file contains the wrong entry or a forgotten “Disallow” directive. A single misplaced line can stop search engines from crawling your most valuable pages. For example:

- A blanket rule like Disallow: / under User-agent: * tells every crawler to stay away from your entire site.

- Blocking “/products/” might unlist all of your shop’s top products.

- Accidentally disallowing “/blog/” could mean losing all organic traffic to your content.
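The effect of rules like these can be checked before they go live. The sketch below is a minimal illustration using Python’s standard-library `urllib.robotparser`. The sample rule sets and the `example.com` URLs are assumptions made up for the demo, and this parser does simple prefix matching, so it may not mirror every nuance of how Google or Bing read a file.

```python
from urllib.robotparser import RobotFileParser


def is_allowed(robots_txt: str, url: str, agent: str = "Googlebot") -> bool:
    """Return True if `agent` may crawl `url` under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(agent, url)


# Hypothetical rule sets mirroring the examples in the list above.
SITE_WIDE_BLOCK = "User-agent: *\nDisallow: /\n"
SECTION_BLOCK = "User-agent: *\nDisallow: /products/\nDisallow: /blog/\n"

if __name__ == "__main__":
    for url in ("https://example.com/products/widget", "https://example.com/about"):
        print(url, is_allowed(SITE_WIDE_BLOCK, url), is_allowed(SECTION_BLOCK, url))
```

Running a check like this against a proposed robots file, with a short list of URLs that must stay crawlable, is one cheap way to catch a stray block before search engines do.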

These issues often start small. Maybe a developer leaves a temporary block during site maintenance. Maybe an agency adds a rule to hide test pages and forgets to remove it. Sometimes, changes made for site speed or security, like adding an SSL certificate or new subdomains, disrupt how bots access your robots file. Either way, search engines see the block and obey it, often without a warning. Business leaders, content creators, and marketers only realize it when traffic declines or new pages never show up in results.

Most teams discover robots file problems when the damage is already done: indexed pages drop, search visibility plummets, and it becomes a scramble to recover lost ground.

What’s Actually Happening When a Robots File Blocks Your Pages

- Disallow rules in the robots file tell search engines not to visit the matching URLs.

- If a search engine’s bot is told not to fetch a page, it won’t crawl it.

- A page that isn’t crawled can’t have its content indexed, no matter how good the copy or meta tags. At most, a search engine may list the bare URL if other sites link to it.

- If a page’s content isn’t in the search index, users can’t find it on Google or in newer AI apps like Perplexity or ChatGPT’s web search.

- With fewer indexed pages, your site’s search visibility shrinks fast, not just on Google and Bing but also in AI-powered search, featured snippets, and voice queries.

- Assets (like images, JavaScript, or CSS) unintentionally blocked by the robots file can keep crawlers from rendering pages the way visitors see them, which can hurt how those pages are evaluated across devices and platforms.

For example, imagine a business that launches a series of new product pages or answers dozens of customer questions in a knowledge base. These should be discoverable, but someone adds a “Disallow: /products/” or “Disallow: /help/” to the robots file. Weeks go by and those investments draw no clicks. The site looks fine to regular visitors, but AI apps and search engines simply never see those new pages. Marketing teams tweak headlines and product copy, but the underlying crawl block keeps their work hidden from the world.

This process unfolds quietly. Most on-page tools won’t detect it. Google Search Console may not always send a specific alert, especially for new or dynamic pages. By the time you notice, you’re dealing with lost leads and disappearing rankings.

Adding to the complexity, search engine algorithms and AI-powered platforms such as SGE, ChatGPT, and Perplexity are updating at an ever-faster pace. These sophisticated mechanisms rely on up-to-date, visible content for indexing and recommending results to users, across both standard queries and conversational searches. If your robots file restricts their access, your company’s insights, competitive offers, and industry expertise effectively don’t exist in the eyes of modern discovery tools. Even if you’re investing heavily in SEO talent, content, and digital strategy, a blocked robots file undercuts all that work. This is why regular monitoring and intelligent automation are no longer optional. They’re a foundational piece of digital success for agencies, SMBs, and fast-growing challenger brands alike.

The Business Impact: Traffic, Leads, and AI Discoverability Lost

Search Visibility

When a robots file blocks critical URLs, your search visibility starts to suffer as soon as crawlers pick up the change. Those pages vanish not only from Google’s traditional results but also from AI-driven platforms where being indexed is essential for discoverability. Even if you run the best on-page SEO, your content simply doesn’t appear because it isn’t indexed in the first place.

Indexed Pages

Sites work hard to turn out high-value content, landing pages, product updates, or service explainers. Block them in the robots file, and they’ll never be seen, even if they exist on your site. For agencies, this can mean entire client campaigns becoming invisible overnight. For SMBs, months of content creation can be wiped out by a forgotten directive.

Website Traffic and Leads

Fewer indexed pages mean fewer entry points for organic traffic. A blocked robots file isn’t just a ranking issue; it’s a revenue risk. Contact forms, downloads, product listings, and seasonal offers might all be hidden from the people searching for them. For most businesses, this translates into fewer leads, lower sales, and missed revenue goals. Agencies often inherit sites where the problem has simmered for months, so they face an uphill fight just to regain lost visibility.

AI Discoverability and Future-Focused Search

AI apps, voice assistants, and search engines built on large language models feed on indexed site data. If your robots file keeps these bots out, your content is excluded from the results powering AI conversations and answers. In a world of AI-powered search, not being indexed means you’re not part of the discussion: your expertise, product, or service gets ignored by the next wave of searchers.

Lost Opportunities

Every time a search query goes unanswered because your page wasn’t indexed, that’s an opportunity given away to a competitor. Whether it’s an enterprise landing page, a local service page, or a viral blog post, visibility is everything in search-driven discovery.

The Solution: Safeguard with NytroSEO and AI SEO Software

The risks of a robots file mistake are too high, but the fix doesn’t need to be complex. NytroSEO provides a simple, effective way to monitor and prevent these costly errors, designed for SMBs, agencies, and technical teams who can’t afford surprises.

What NytroSEO Brings to the Table:

- Continuous robots file monitoring: The platform actively scans your site’s robots file and checks permissions to ensure nothing is blocked unintentionally.

- AI SEO software advantage: By leveraging the power of automated AI, NytroSEO checks for common missteps or risky patterns that might otherwise go unnoticed.

- Real-time alerts and guidance: If a disallow directive might hurt your indexed pages or overall search visibility, you get immediate, actionable recommendations.

- AI apps and organic AI search visibility: NytroSEO simulates how not only search engines, but also modern AI apps, access your site, identifying gaps in visibility that classic tools miss.

- Automatic crawl and indexability tests: NytroSEO compares your expected indexed pages against what search engines actually see, revealing discrepancies instantly.

- No extra technical burden: The system works via a simple snippet with no heavy tracking, and it also takes into account other key factors like site speed and the presence of a valid SSL certificate to avoid secondary issues that can block bot access.

You can stop worrying about hidden accidental blocking and keep your content, products, and brand visible to both traditional search engines and the latest AI-powered platforms.

NytroSEO also fits seamlessly into any existing site stack. Whether you manage a WordPress blog, Shopify storefront, custom enterprise site, or multi-language international portal, this AI SEO software provides rapid diagnostics without technical disruption. Agencies and teams save hours of manual analysis, while non-technical site owners stay protected from the risks of invisible blocking. Explore the system for free.

How It Works: NytroSEO Robots File Monitoring in Action

Keeping your pages open to search engines and AI platforms demands more than a static checklist. NytroSEO’s approach is clear, step-based, and easy for non-technical users:

Analyze the robots file

NytroSEO automatically scans the robots file at the root of your website. It reads the file exactly as search engines and AI scrapers do.
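How NytroSEO locates and reads the file internally isn’t described here. As a rough illustration of the idea, the sketch below derives the robots file location from any page URL and loads it with Python’s standard library. The function names `robots_url` and `load_robots` are hypothetical, and `load_robots` makes a live network request.

```python
from urllib.parse import urlsplit, urlunsplit
from urllib.robotparser import RobotFileParser


def robots_url(page_url: str) -> str:
    """Build the robots.txt URL for the host that serves `page_url`."""
    parts = urlsplit(page_url)
    # The file always lives at the root path of the same scheme and host.
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


def load_robots(page_url: str) -> RobotFileParser:
    """Fetch and parse the live robots.txt (performs a network request)."""
    parser = RobotFileParser(robots_url(page_url))
    parser.read()
    return parser
```

Because the location is derived per host, a subdomain such as a shop or help center has its own robots file, which is one reason new subdomains are an easy place for a block to slip in unnoticed.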

Identify blocked pages and resources

The system flags any “Disallow” lines or wildcard patterns (robots files support * and $, not full regular expressions) that restrict important sections, like /products/, /blog/, or /help/, and notes any blocked resources (JS/CSS/images) that could inadvertently limit page rendering.
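A simplified version of this kind of check can be sketched in a few lines. The example below is an assumption-laden illustration, not NytroSEO’s actual logic: the helper `find_risky_rules` is hypothetical, it uses plain prefix matching without wildcard support, and it ignores user-agent groups and any Allow rules that might override a block.

```python
def find_risky_rules(robots_txt: str, important_paths: list[str]) -> list[tuple[int, str, str]]:
    """Return (line number, rule, affected path) for Disallow rules that
    cover an important section of the site."""
    findings = []
    for number, raw in enumerate(robots_txt.splitlines(), start=1):
        line = raw.split("#", 1)[0].strip()  # drop trailing comments
        if ":" not in line:
            continue
        field, value = (part.strip() for part in line.split(":", 1))
        # An empty Disallow value blocks nothing, so skip it.
        if field.lower() != "disallow" or not value:
            continue
        for path in important_paths:
            if path.startswith(value):
                findings.append((number, value, path))
    return findings


# Hypothetical robots file with a leftover maintenance rule.
SAMPLE = (
    "User-agent: *\n"
    "Disallow: /tmp/\n"
    "Disallow: /products/  # left over from maintenance\n"
    "Allow: /blog/\n"
)

if __name__ == "__main__":
    for number, rule, path in find_risky_rules(SAMPLE, ["/products/", "/blog/", "/help/"]):
        print(f"line {number}: Disallow: {rule} blocks {path}")
```

Reporting the line number alongside the rule makes it easier to trace a block back to the change that introduced it.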

Compare indexed pages vs expected pages

NytroSEO checks your sitemap and page inventory, cross-checking it with what’s actually indexed by major search engines and AI apps. If important pages aren’t being indexed, the system alerts you to mismatches and potential robots file issues.
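The comparison itself boils down to a set difference. The sketch below assumes you already have a list of indexed URLs from some source, such as an export from a search console; how NytroSEO gathers that list isn’t specified here. The names `sitemap_urls`, `missing_from_index`, and the sample sitemap are made up for illustration.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Hypothetical sitemap for the demo.
SAMPLE_SITEMAP = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/products/widget</loc></url>
  <url><loc>https://example.com/help/setup</loc></url>
</urlset>"""


def sitemap_urls(sitemap_xml: str) -> set[str]:
    """Extract every <loc> URL from a sitemap document."""
    root = ET.fromstring(sitemap_xml.strip())
    return {
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    }


def missing_from_index(sitemap_xml: str, indexed_urls: list[str]) -> list[str]:
    """Return sitemap URLs that do not appear in the indexed list."""
    return sorted(sitemap_urls(sitemap_xml) - set(indexed_urls))


if __name__ == "__main__":
    print(missing_from_index(SAMPLE_SITEMAP, ["https://example.com/"]))
```

URLs that turn up in this gap are the ones worth testing against the robots file first.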

Simulate how search engines and AI apps access the site

Modern search platforms aren’t limited to classic crawlers; they include AI chatbots and new discovery engines. NytroSEO mimics these visits, revealing whether your content is accessible to both traditional search engines and AI-driven search.
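One simple way to approximate such a simulation is to evaluate the same URL against the robots file once per crawler name. The sketch below is illustrative only: the crawler tokens listed are the commonly published user-agent names for these bots, the sample rules are invented, and real crawlers may interpret edge cases differently from Python’s `urllib.robotparser`.

```python
from urllib.robotparser import RobotFileParser

# Commonly published crawler tokens; treat the list as an assumption to verify.
CRAWLERS = ["Googlebot", "Bingbot", "GPTBot", "PerplexityBot"]

# Hypothetical robots file that blocks one AI crawler site-wide.
SAMPLE_RULES = (
    "User-agent: GPTBot\n"
    "Disallow: /\n"
    "\n"
    "User-agent: *\n"
    "Disallow: /admin/\n"
)


def access_report(robots_txt: str, url: str, crawlers=CRAWLERS) -> dict[str, bool]:
    """Map each crawler name to whether it may fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in crawlers}


if __name__ == "__main__":
    report = access_report(SAMPLE_RULES, "https://example.com/blog/post")
    for agent, allowed in report.items():
        print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

A report like this makes it obvious when a page is open to traditional search engines but closed to one or more AI crawlers, or the other way around.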

Provide clear fixes and recommendations

If the platform detects risky rules or uncrawled pages, it supplies simple, actionable advice. This includes removing unintended directives, updating your SSL certificate for bot compatibility, improving site speed for better crawl efficiency, and sending reminders when your robots file needs an update.

The result? Your team sees what bots and AI apps see, before a business-impacting mistake can cost you.

Additionally, NytroSEO’s monitoring covers other often-linked technical risks. For example, changes to your SSL certificate or CDN configuration sometimes unintentionally limit bot access, not just for search engines but also for AI-driven tools scraping and analyzing your public content. NytroSEO checks for these hidden obstructions, providing a holistic safeguard that goes beyond the basics. Even tweaks for site speed, web hosting, or security plugins can create conditions where your robots file is bypassed or replaced. With NytroSEO, your visibility keeps pace with the evolving complexities of digital infrastructure.
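Catching a robots file that has been silently replaced comes down to comparing the current version with the last known good one. The sketch below shows one possible approach under simplifying assumptions: the helper names are hypothetical, user-agent groups are ignored, and only newly added Disallow values are reported.

```python
def disallow_rules(robots_txt: str) -> set[str]:
    """Collect every non-empty Disallow value in the file."""
    rules = set()
    for raw in robots_txt.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop trailing comments
        field, sep, value = line.partition(":")
        if sep and field.strip().lower() == "disallow" and value.strip():
            rules.add(value.strip())
    return rules


def new_blocks(previous: str, current: str) -> list[str]:
    """Return Disallow values present now that were absent before."""
    return sorted(disallow_rules(current) - disallow_rules(previous))


if __name__ == "__main__":
    before = "User-agent: *\nDisallow: /admin/\n"
    after = "User-agent: *\nDisallow: /admin/\nDisallow: /blog/\n"
    print(new_blocks(before, after))
```

Storing the previous copy after each deployment and running this comparison on a schedule turns a silent change into something a team can review the same day.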

Why Hidden Robots File Mistakes Demand Immediate Action

Time is a factor. Every day your pages sit blocked in the robots file, you’re invisible to both traditional and new-wave search platforms. The longer this silent error persists, the more ground you lose to competitors, to changing search algorithms, and to evolving AI-driven discovery engines. Missing a robots file mistake isn’t just a technical misstep. It’s a business oversight with lasting cost.

NytroSEO makes it fast and low-risk for any team to validate site visibility, restore blocked indexed pages, and future-proof your search presence, no matter how your site changes or grows. Whether you manage dozens of clients, a growing e-commerce shop, or an authoritative content site, visibility begins with an open door for search engines and AI to read your content.

Don’t let a simple configuration error silence your business online. Take the first step to full search visibility today.

FAQ: Robots File and Indexed Pages

What is a robots file and how does it affect indexed pages?

A robots file, also known as robots.txt, is a plain-text file at the root of your website that tells search engine bots which parts of your site to crawl and which to skip. If key areas are blocked, those pages may never be indexed by search engines, causing a direct loss of search visibility.

Can an error in my robots file hurt organic AI search visibility?

Yes, an error in your robots file can stop AI apps, as well as classic search engines, from discovering key content. This reduces organic AI search visibility, keeping your site out of both conventional search results and AI-driven answers.

How can NytroSEO’s AI SEO software detect robots file mistakes?

NytroSEO’s AI SEO software scans your robots file, detects harmful blocking rules, and compares your indexed pages against expectations. This approach helps ensure nothing prevents your site from being seen by people or AI apps.

Does a robots file block impact site speed or SSL certificate status?

While the robots file itself doesn’t directly affect site speed or your SSL certificate, blocking assets such as CSS or JavaScript in the robots file can keep crawlers from rendering pages properly, which affects how those pages are evaluated. Sometimes, changes like implementing a new SSL certificate can alter how robots.txt is served, so regular checks are important.
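How the file is served matters because crawlers react to the HTTP status of the robots.txt request, not just its contents. The sketch below summarizes the behavior as commonly described in RFC 9309 and Google’s published guidance; treating 429 like a server error follows Google’s documentation, and other crawlers may behave differently. The function name and the returned labels are made up for illustration.

```python
def robots_fetch_policy(status: int) -> str:
    """Rough guide to how a crawler may react to the robots.txt HTTP status."""
    if 200 <= status < 300:
        return "parse"  # read the file and follow its rules
    if status == 429 or 500 <= status < 600:
        # The file is unreachable: crawlers tend to assume everything is blocked.
        return "assume-disallow-all"
    if 400 <= status < 500:
        # The file is unavailable (for example 404): no restrictions are assumed.
        return "assume-allow-all"
    return "follow-or-retry"  # redirects and anything unexpected


if __name__ == "__main__":
    for code in (200, 301, 404, 503):
        print(code, robots_fetch_policy(code))
```

Under this reading, a server error on robots.txt after a certificate or CDN change can act like a temporary site-wide block even though the file’s rules never changed.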

Why do indexed pages disappear from search visibility so suddenly?

Indexed pages can disappear when a robots file mistakenly blocks URLs after a website change, plugin update, or server migration. Because search engines respect these blocks, those pages drop from search visibility as soon as bots recrawl your site. Regular monitoring is essential to prevent this silent loss.